TL;DR

AI isn’t destroying most jobs (yet) – but it’s draining many of them of meaning.

What’s left, we call Hollow Jobs: roles that exist on paper while their thinking work has been handed to machines. Research suggests the damage becomes visible after roughly 18 months: skill loss, declining motivation, rising turnover. And the most insidious part: the people affected are the last to notice. The good news: hollowing is preventable, if organisations deliberately design how humans and AI work together. This article lays out the mechanisms, the timeline, and six concrete countermeasures.

Hollowing of Work: What does this really mean?

Imagine arriving at work in the morning – and there’s nothing left to think about

You still have a desk. A salary. A title. But the things that once made your job interesting – working through a tricky analysis, developing your own approach to a problem, making a decision that hinges on your judgement – a system handles all of that now. You review what the AI suggests. You nod. You click “Approve.”

Sounds exaggerated? For many knowledge workers, this is already everyday reality.

The public debate about artificial intelligence and work has circled around a single question for years: Will AI replace my job? In most cases, the answer is: No. At least not immediately. Not entirely. Though that’s unlikely to reassure many people.

The OECD estimates that 27% of all jobs are in occupations at high risk of automation (OECD, 2025). The Brookings Institution calculates that roughly 30% of all US workers could see at least half their tasks disrupted by generative AI (Brookings Institution, 2024). But the mass job loss many fear hasn’t materialised.

The truly pressing question is a different one: What’s left of your job once AI takes over the actual value?

What we’re all observing right now – on LinkedIn, across social media, in our own jobs – isn’t displacement. It’s hollowing. A gradual gutting of work that structurally persists but loses its cognitive core: the thinking, creating, judging, problem-solving.

The technical term: Hollowing of Work.

The result of this process is what we call Hollow Jobs – jobs without substance.

This is something fundamentally different from job loss. Your job still appears in the org chart. Your salary still arrives. But what made the work meaningful – autonomy, the experience of competence, creative engagement – all of that disappears. What remains is a shell.

A hollow job is a role that continues to exist structurally, but whose meaningful cognitive work – independent thinking, creating, and judging – has been delegated to AI systems. The human shifts from maker to approver, from problem-solver to reviewer of AI output.


Why work becomes hollow: The Three-Layer Model

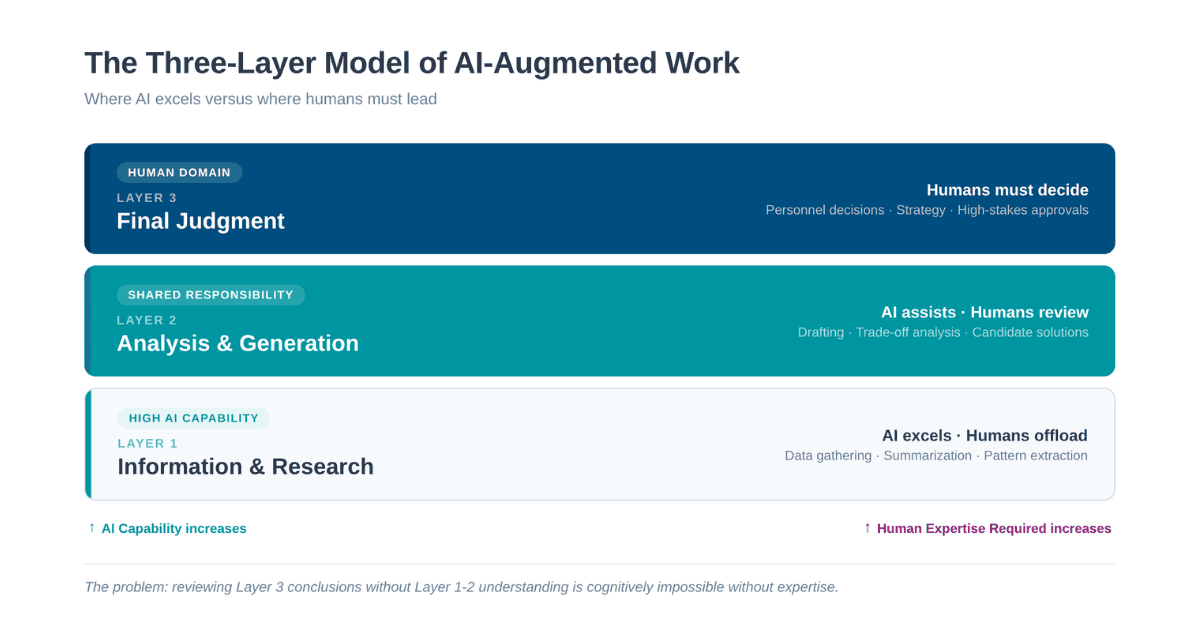

To understand why hollowing isn’t accidental but follows a structural pattern, it helps to look at the three layers on which knowledge work creates value.

We call it the Three-Layer Model of AI-Augmented Value Creation:

Layer 1: Information and Research

AI systems excel here. Gathering data, summarising sources, extracting patterns – these tasks are bounded and verifiable. Delegating them to AI saves time without much risk.

Layer 2: Analysis and Generation

AI systems are useful here, but fallible. They can draft options, analyse trade-offs, generate text or code. Quality varies. Humans should review and validate.

Layer 3: Final Judgement

AI systems are disqualified here. Personnel decisions, strategic direction-setting, ethical trade-offs, career-defining calls – these require human discretion. Humans must decide.

The problem arises at the interface: humans are expected to make Layer 3 judgements without having gone through Layers 1 and 2 themselves.

Think about what that concretely means: you’re supposed to evaluate an analysis you didn’t produce. Review a line of reasoning you didn’t develop. Make decisions based on information you didn’t gather.

Can you do that? Perhaps, if you bring years of experience. Otherwise, it’s cognitively impossible – not because of a lack of intelligence, but because judgement is built on experience. And those who no longer gain that experience lose the foundation for judgement.

What happens next is entirely predictable: review becomes rubber-stamping. Oversight becomes performative. And responsibility becomes a formality.
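As a rough illustration, the three layers described above can be written down as a small lookup structure. This is only a sketch: the names `AIRole`, `Layer`, `LAYERS`, and `hollowing_risk` are hypothetical, and the check assumes that risk arises when someone makes the Layer 3 call without having worked on either lower layer themselves.

```python
from dataclasses import dataclass
from enum import Enum


class AIRole(Enum):
    DELEGATE = "AI does the work"
    DRAFT_AND_REVIEW = "AI drafts, a human reviews and validates"
    HUMAN_DECIDES = "a human decides"


@dataclass(frozen=True)
class Layer:
    number: int
    name: str
    ai_role: AIRole


# The three layers as described in the article.
LAYERS = (
    Layer(1, "Information and Research", AIRole.DELEGATE),
    Layer(2, "Analysis and Generation", AIRole.DRAFT_AND_REVIEW),
    Layer(3, "Final Judgement", AIRole.HUMAN_DECIDES),
)


def hollowing_risk(layers_done_by_human: set[int]) -> bool:
    """Hypothetical check: True when a person makes the Layer 3 judgement
    without having worked through Layer 1 or Layer 2 themselves."""
    decides = 3 in layers_done_by_human
    touched_lower_layers = bool({1, 2} & layers_done_by_human)
    return decides and not touched_lower_layers


if __name__ == "__main__":
    for layer in LAYERS:
        print(f"Layer {layer.number}: {layer.name} -> {layer.ai_role.value}")
    print(hollowing_risk({3}))        # approver only
    print(hollowing_risk({1, 2, 3}))  # involved throughout
```

A role that only ever shows up as `{3}` is the approver-only pattern the article warns about.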

Three mechanisms that hollow out work from the inside

Hollowing doesn’t happen through a single event. Three mechanisms reinforce each other – quietly, gradually, and often invisibly.

1. The Expertise Paradox: A vicious cycle

There’s a circular trap we call the Expertise Paradox. At its core, it explains why AI-augmented leadership and oversight are so difficult in the long run:

You need expertise to control AI. But using AI prevents exactly the practice that builds and maintains that expertise.

That sounds abstract at first glance. It isn’t.

Software developers who rely heavily on AI code generation score 17% lower on comprehension tests for new programming languages than colleagues working without AI assistance (Anthropic, 2026). In medicine, a study shows that endoscopists who routinely work with AI-assisted polyp detection exhibit a six-percentage-point lower detection rate when the technology is suddenly removed – after just a few months of dependency (The Lancet Gastroenterology & Hepatology, 2025). Lawyers work faster with AI but lose the drafting practice that sharpened their legal judgement.

The pattern repeats across industries – the domain changes, the mechanism stays the same.

This is particularly challenging for early-career professionals. Junior developers who work in AI-augmented workflows from day one skip exactly the slow, error-prone, sometimes frustrating learning that builds expertise in the first place. Experienced professionals can use AI as an accelerator because they have a foundation. Junior staff never build that foundation at all.

For leaders, this means: No, AI cannot replace leadership. But AI can erode the competence base on which good leadership rests. Leaders who no longer understand how work functions in detail – because AI has taken over the operational layer – lose the foundation for strategic decisions. After two years, the question really is no longer whether AI replaces leadership. The question is whether leaders retain the expertise that makes leadership possible.

2. The 30-Minute Problem: Why monitoring fails

Let’s say your organisation handled the AI rollout well. People review the output. There are approval processes. Sounds solid? Unfortunately, it often isn’t – for a reason cognitive research has documented for decades: Vigilance Decrement.

When your task is to monitor AI-generated output and only intervene when errors occur, your detection accuracy measurably declines within the first 30 minutes. Response time increases. This isn’t a matter of discipline – it’s a property of our attentional system under conditions of low event density, which is precisely the situation when a good AI system rarely makes mistakes (Thackray & Touchstone, 1989, in: Ergonomics; Frontiers in Cognition, 2025: “The Vigilance Decrement: Its First 75 Years”).

Air traffic control acted on this long ago: a maximum of two hours of uninterrupted monitoring, then a mandatory switch. In knowledge work, where people spend hours reviewing AI-generated code, text, legal analyses, or financial models? No limits. No regulations. No break rules. That this can’t work in the long run should be obvious.

Researchers from BCG and Harvard Business Review recently coined the term “AI Brain Fry” for the resulting state of exhaustion – mental overload from excessive monitoring and coordination of AI systems (BCG/Harvard Business Review, 2026). As one study participant described it: it’s like having twelve browser tabs open in your head, all competing for attention at the same time.

And that comparison resonates with everyone – you don’t need to be an executive to feel it.

3. The invisible erosion: We don’t notice it happening

The third mechanism makes hollowing particularly dangerous – because there’s no internal warning signal.

AI-augmented work simply feels better at first. Most tasks are faster. Results look more professional. The subjective sense of competence rises. Meanwhile, actual competence declines.

Researchers call this illusory competence – you believe you’re more capable than you are, because AI invisibly fills the gap. And the insidious part: AI assistance systems don’t just accelerate skill decay – they also prevent experts and learners from noticing that decay in themselves (Macnamara et al., 2024, in: Cognitive Research: Principles and Implications).

A 2025 study by Microsoft Research and Carnegie Mellon University confirms this: the more heavily knowledge workers rely on AI tools, the less critical thinking they engage in – and the harder it becomes to summon critical thinking when needed (Microsoft Research & Carnegie Mellon University, 2025). Not because they’ve forgotten how, but because the cognitive habit fades. Let’s be honest: you recognise that too, don’t you?

The Centre for International Governance Innovation describes this process as Agency Decay – a gradual decline from initial experimentation through growing integration and dependency to what the authors call “chosen blindness”: people have lost the ability to work without AI, yet remain convinced they still could.


The 18-Month Hypothesis

Here’s where it gets interesting: most AI workplace studies cover only 3 to 12 months and report positive results. That’s the honeymoon phase. But our synthesis across four research streams points to a turning point at roughly 18 months: skill decay becomes noticeable, motivation declines, turnover intentions rise.

Why does the loss of meaning weigh so heavily? Research across 30 European countries shows that autonomy, competence, and relatedness have a 4.6 times stronger association with work meaningfulness than salary or job security (Cambridge Journal of Management and Organization, 2023).

In other words: pay rises don’t compensate for loss of meaning.

For CEOs, this means: if your organisation broadly adopted AI tools in early 2026, expect the first skill effects by late 2027 and measurable motivation loss by early 2028. The moment to act isn’t when the problems become visible – it’s now. (And that’s why we’re here for you.)

Augmentation over hollowing: Three conditions that make the difference

There are domains where technology has demonstrably made human work better – not worse. Robot-assisted surgery, computer-aided design in architecture: both cases show that augmentation can succeed. What they share:

1. The human remains the decision-maker

In the Da Vinci system, the surgeon operates. The system extends their capabilities without replacing their judgement.

2. Training pathways are rebuilt around the technology

In robot-assisted surgery, training was designed in from the start: credentialing requires demonstrated hands-on competence.

3. The transition gets time and institutional support

CAD didn’t hollow out architecture – but the transition took decades, accompanied by curriculum reform.

Where these three conditions are absent – as is frequently the case with the rapid AI rollouts of 2024 to 2026 – hollowing risk rises substantially.

Six design principles to prevent hollowing

As we’ve seen, hollowing is a design problem: it doesn’t happen automatically when AI is introduced. It happens when AI is deployed in a way that reduces humans to reviewers rather than active participants. And how AI is deployed is a deliberate choice – made by leadership, by project teams, by organisational design.

In other words: those who design work differently (active involvement instead of passive monitoring, preserved learning pathways, protected autonomy) prevent hollowing. Which also means: it’s solvable by design. Six principles from research and practice:

1. Active Loop over Passive Monitoring

Human-on-the-Loop (oversight with intervention capability) paradoxically produces the worst outcomes – monitoring fatigue compounded by high-stress problem-solving during failures. Adopt Human-in-the-Loop instead: keep people actively embedded in decision loops. Cognitively more demanding, but it preserves competence and judgement.

2. Skill Maintenance and AI-Free Zones

Reserve 20 to 30% of working time in AI-intensive roles for tasks without AI support. Aviation knows this principle: pilots must regularly fly manually because competence decays without practice. Some companies are already experimenting with “Off-AI Days” where teams discover what they can no longer do without AI.
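To make the 20 to 30% figure tangible, here is a small back-of-the-envelope calculation. The function name `ai_free_hours` and the 40-hour week are assumptions for illustration only; plug in your own contractual hours.

```python
def ai_free_hours(
    weekly_hours: float,
    share_low: float = 0.20,   # lower bound from the article
    share_high: float = 0.30,  # upper bound from the article
) -> tuple[float, float]:
    """Return the (minimum, maximum) weekly hours to reserve for AI-free work."""
    if weekly_hours < 0:
        raise ValueError("weekly_hours must not be negative")
    return weekly_hours * share_low, weekly_hours * share_high


if __name__ == "__main__":
    low, high = ai_free_hours(40)  # assumed 40-hour week
    print(f"{low:.0f} to {high:.0f} hours per week")  # prints "8 to 12 hours per week"
```

For a full-time role, that is roughly one to one and a half working days per week without AI support.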

3. Cap Monitoring Sessions

Air traffic control limits monitoring shifts to two hours. For knowledge workers reviewing AI output, there are no comparable rules. Cap continuous AI output review, then switch tasks.
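A session cap is easy to turn into a simple schedule. The sketch below splits a block of review time into capped sessions. The function `plan_review_sessions` is hypothetical, and the 120-minute cap in the example is borrowed from the air traffic control figure purely for illustration; the right cap for knowledge work is not established here.

```python
def plan_review_sessions(total_minutes: int, cap_minutes: int) -> list[int]:
    """Split a review workload into sessions no longer than cap_minutes.

    Each list entry is one uninterrupted review session; a task switch
    is assumed to happen between consecutive entries.
    """
    if total_minutes < 0:
        raise ValueError("total_minutes must not be negative")
    if cap_minutes <= 0:
        raise ValueError("cap_minutes must be positive")
    full_sessions, remainder = divmod(total_minutes, cap_minutes)
    sessions = [cap_minutes] * full_sessions
    if remainder:
        sessions.append(remainder)
    return sessions


if __name__ == "__main__":
    # Five hours of AI output review with an assumed two-hour cap.
    print(plan_review_sessions(300, 120))  # prints [120, 120, 60]
```

The point is less the arithmetic than the rule it encodes: review time gets planned in bounded blocks instead of running open-ended.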

4. Measure What Actually Matters

Productivity metrics don’t capture whether your analyst still understands the assumptions behind the AI model. Add: skill tests (not self-assessment), experienced autonomy, sense of meaning, turnover intentions. Future skills like critical judgement, independent problem-solving, and the ability to work with and without AI – these are the competencies that will determine competitiveness.

5. Actively Protect Junior Talent

AI takes over exactly the routine work that traditionally trained early-career professionals: researching, drafting, making mistakes, learning from them. When juniors do nothing but generate output from day one, the competence ladder breaks. Emerging leader programmes must include phases of AI-free work.

6. Have the Ethical Leadership Conversation

Before implementing principles 1 through 5, your leadership needs a clear vision: What do we believe about the role of humans in our work? What are we willing to pay for that belief? This conversation has nothing to do with classroom philosophy – every AI decision implicitly answers these questions.

The EU AI Act: Well-intentioned, not resolved

The EU AI Act requires effective human oversight of high-risk AI systems, assigned to persons with the “necessary competence, training and authority”, from August 2026 (EU AI Act, 2024, Art. 14 and Art. 26). Neither requirement is defined in operational terms. And the Expertise Paradox exposes the gap: the regulation demands oversight competence but creates no conditions to maintain it.

For organisations in the German-speaking region, there’s a structural advantage: works councils have held explicit co-determination rights over AI deployment since 2021. These structures are a genuine asset – provided works council members understand the dynamics described in this article and can negotiate at a technical level.

What leaders must decide now about AI and work

We’ve covered a lot of mechanisms, paradoxes, and research findings in this article. But at its core, this is about something very simple: Do you want the people in your organisation to think – or merely to react?

That sounds like a rhetorical question. It isn’t. Because most AI implementations are currently answering it with “react” – silently, by default. Not out of malice, but because nobody explicitly asked the question. Speed and cost savings were the obvious goals, and the question of what meaning remains in the work simply wasn’t on the agenda.

Our experience from working with organisations in over 50 countries shows: the companies that navigate AI transformations well aren’t the ones with the best technology. They’re the ones whose leadership dares to have genuinely open conversations. And no, that’s not a given. You have to talk about what human work is worth in an organisation. About what you’re willing to invest to protect it. And about where you’ll deliberately move slower than the competition – because you know the shortcut will cost more in the long run.

These conversations are uncomfortable, we get it. But they’re the difference between organisations that still have their expertise in three years, and those that realise their teams somehow look productive… but nobody remembers why.

If reading this made you think about your own organisation: we help you make hollowing visible before it becomes a problem.

Frequently Asked Questions

What exactly is a Hollow Job?

A hollow job is a role that formally continues to exist, but whose meaningful cognitive work has been delegated to AI systems. The human reviews and approves rather than thinking and deciding. The job description remains. The substance is gone.

How does the Hollowing of Work differ from job loss?

Job loss means the position disappears. Hollowing means the position remains, but the work loses its cognitive core – and with it, its meaning. The result is a gradual draining of purpose, often invisible in productivity metrics.

What is the Expertise Paradox in the context of AI and leadership?

You need expertise to control AI. But using AI prevents exactly the practice that builds that expertise. The more you use AI, the less able you are to judge whether the AI is correct. Lisanne Bainbridge described this automation paradox as early as 1983 – it remains unresolved to this day (Bainbridge, 1983: “Ironies of Automation”, in: Automatica).

Can AI replace leadership?

No, definitively not. But it can erode the competence base on which good leadership rests. Leaders who lose contact with operational reality through AI delegation make poorer strategic decisions. The question isn’t whether AI replaces leadership, but whether leaders retain the expertise that makes modern leadership possible.

What can organisations concretely do to prevent hollowing?

Six design principles provide a framework: active decision loops instead of passive monitoring, structured skill maintenance with AI-free zones, monitoring session limits, measuring competence and meaning alongside productivity, dedicated protection of junior development, and an explicit leadership conversation about the role of humans in the work. Details can be found in the full report.

Which future skills will be decisive in the age of AI?

Critical judgement, independent problem-solving, the ability to question AI output rather than simply approving it, and the resilience to remain capable without AI support. Organisational resilience emerges when teams systematically build and maintain these future competencies.

What does Self-Determination Theory have to do with AI?

According to Deci and Ryan, intrinsic motivation rests on three basic needs: autonomy, competence, and relatedness. When AI systems constrain autonomy and undermine the experience of competence, the sense of meaning declines – regardless of salary. Non-monetary work factors are 4.6 times more strongly associated with work meaningfulness than income (Cambridge Journal of Management and Organization, 2023).
